If you’ve happened to walk around East London lately you might have run into a little project we developed as part of the Make Hackney Sparkle program. We lovingly call it “Hackney Xmas Projections”.

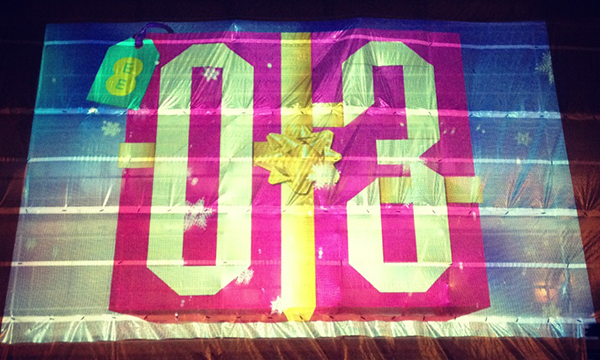

It’s a very simple advent calendar projected on Great Eastern Street, where local businesses from Hackney give away a special offer every day over the 24 days of Christmas. This post is a technical overview of how we did it, so if you are not up for technical chit-chat, feel free either to run off to your festive celebrations now or to enjoy the offer you get from opening the calendar yourself.

Requirements

These were the initial requirements. Keep in mind that they were open, to say the least, so we needed to be flexible (more than usual!):

- The calendar needed to run 24/7 for roughly a month in several locations (eventually whittled down to only one).

- Content needed to be easily updated. We didn’t know whether all the content would be available before release day (December 1st), so we needed to cater for content updates.

- The application needed to be updateable. This wasn’t a business requirement, but a technical one in case we needed to deploy new versions of the app to fix bugs.

- We needed to cater for an unreliable Internet connection. We didn’t know whether we would have a full Ethernet or Wi-Fi network available, a 3G dongle or nothing. The app needed to work with no Internet at all (yes, this conflicts with the content and app update requirements; it was a clear trade-off: no network = no updates).

- The app needed to be interactive. This didn’t make it into the end product, but there were discussions about allowing passers-by to somehow interact with the projection, either by a Foursquare check-in, an SMS message or similar.

A Flash / AIR application would have fit the bill for these requirements and we could have knocked the project out in less than a week, but when we get this kind of small project we like to use it to learn something new. In this case we chose Haxe, which is definitely not new to us, but which we had never used for client work. To be more precise, we have used Haxe in client work, but to build development utilities, not user-facing applications.

Playing to the almighty cross-platform capabilities of Haxe, we designed the code so that it could be easily compiled to different platforms. We kept as much code as possible platform independent using “pure” Haxe (all the business logic, data access, unit tests, etc.) and put all the rendering behind an IRender interface.

This made a theoretical Plan B escape route a lot simpler, in case it was ever required. To be clear, compiling to another platform only requires coding a view for it, which means implementing a (rather minimal) interface. Depending on the platform, we calculated this would be roughly a day’s work.
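To make the idea concrete, here is a minimal sketch of that split. It is written in Python rather than Haxe purely for illustration, and every name in it (`Render`, `Calendar`, `TextRender`, `show_day`) is hypothetical; it is not our actual IRender interface.

```python
from typing import Protocol


class Render(Protocol):
    """Hypothetical stand-in for the rendering interface: the only platform-specific part."""

    def show_day(self, day: int, offer: str) -> None: ...


class Calendar:
    """Platform-independent logic: knows which offers exist, not how to draw them."""

    def __init__(self, offers: dict[int, str], render: Render) -> None:
        self.offers = offers
        self.render = render

    def open_door(self, day: int) -> bool:
        offer = self.offers.get(day)
        if offer is None:
            return False  # no content available for this day yet
        self.render.show_day(day, offer)
        return True


class TextRender:
    """A trivial 'platform' that collects lines instead of drawing on screen."""

    def __init__(self) -> None:
        self.lines: list[str] = []

    def show_day(self, day: int, offer: str) -> None:
        self.lines.append(f"Day {day}: {offer}")
```

Targeting another platform then means writing one more class like `TextRender`, while `Calendar` and its tests stay untouched.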

The hardware

The whole thing runs on an off-the-shelf Mac Mini, nothing special about it. We disabled services that can give you a nasty surprise down the line such as system updates, automatic Bluetooth configuration, etc.

We also enabled automatic login so the Mini goes from boot-up to application running without human intervention (the application is launched and kept alive using launchd, see below for more info).

As for the actual projections we are using a single EPSON Z8350 projector.

We let OS X take care of keeping our applications running at all times by implementing a couple of launchd tasks. The only trick here is passing in all the environment variables the applications expect, since a launchd task doesn’t have access to them by default.
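As a rough sketch of what such a task definition contains, the snippet below builds a launchd property list with Python’s `plistlib`. The keys (`Label`, `ProgramArguments`, `RunAtLoad`, `KeepAlive`, `EnvironmentVariables`) are standard launchd keys; the label, program path and environment values are made up for the example and are not our real configuration.

```python
import plistlib


def build_launchd_job(label: str, program: str, env: dict[str, str]) -> bytes:
    """Return the XML property list for a job that launchd starts and keeps alive."""
    job = {
        "Label": label,
        "ProgramArguments": [program],
        "RunAtLoad": True,            # start as soon as the job is loaded
        "KeepAlive": True,            # relaunch the program if it exits
        "EnvironmentVariables": env,  # launchd jobs do not inherit the shell environment
    }
    return plistlib.dumps(job)


plist_xml = build_launchd_job(
    "com.example.projections",  # hypothetical label
    "/Applications/Projections.app/Contents/MacOS/Projections",  # hypothetical path
    {"PATH": "/usr/local/bin:/usr/bin:/bin"},  # hypothetical value
)
```

The resulting bytes would typically be saved as a `.plist` file under `~/Library/LaunchAgents` and loaded with `launchctl`.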

The projection application

The public-facing application that takes care of the projections is a native C++ OS X application running fullscreen. Most of it is vanilla Haxe code, but all the visible stuff is done using hxOpenFrameworks (the Haxe bindings for OpenFrameworks).

So, we write Haxe, compile it to C++ code and finally compile that C++ code to a native OS X binary, all of this using the Haxe C++ build chain.

We chose hxOpenFrameworks over NME because there is no video API for NME yet. The only problem we found was playing videos, which caused a big memory leak. We think this is somewhere in hxOpenFrameworks, but we couldn’t figure it out.

In the end we found a workaround that improved the application lifetime from 12 hours to what we estimate to be several months (current uptime is now over 10 days, with memory well within expected and acceptable limits).

The helper service

To simplify the client-facing application as much as possible, we built a standalone Neko application to take care of the invisible stuff.

It monitors the state of the main application in terms of memory usage and sanity checks (it pings the application and waits for a success response). If either of these checks is not within the expected range, it raises an alert (see the logging and warning service below).
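The monitoring logic boils down to something like the following sketch. It is Python for illustration only (the real helper is a Neko application), and the `Health` type, the `check` function and the 512 MB limit are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Health:
    memory_mb: float  # memory currently used by the main application
    ping_ok: bool     # whether the application answered the ping with success


def check(health: Health, max_memory_mb: float = 512.0) -> list[str]:
    """Return alert messages; an empty list means both checks passed."""
    alerts = []
    if not health.ping_ok:
        alerts.append("ping failed: no success response from main app")
    if health.memory_mb > max_memory_mb:
        alerts.append(
            f"memory {health.memory_mb:.0f} MB exceeds {max_memory_mb:.0f} MB"
        )
    return alerts
```

Each message returned by `check` would be forwarded to the logging backend as a warning-level entry.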

The helper service also takes care of content updates. We use a Git repository to hold the content, including the helper and main apps. This allows us to have dev, stage and prod versions of the content mapped to Git branches.

Updates are a matter of push and pull operations, and the need for an update is indicated by the remote repository being on a different commit than the local one. This makes rollbacks a piece of cake too.

Updates are atomic thanks to symlinks to the local repository folders (each commit has its own folder, named using the commit hash), i.e. we don’t switch to the new content until the pull has finished. Then, and only then, do we change the symlink from the current folder to the new one.
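One common way to do this kind of switch is to create the new symlink under a temporary name and rename it over the old one. The sketch below shows that technique in Python; the function name and the folder names are invented for the example, and we are not claiming this is line-for-line what the helper does.

```python
import os
import tempfile


def switch_current(link_path: str, new_target: str) -> None:
    """Point link_path at new_target without a moment where the link is missing."""
    tmp_link = link_path + ".tmp"
    if os.path.lexists(tmp_link):
        os.remove(tmp_link)  # leftover from an interrupted switch
    os.symlink(new_target, tmp_link)
    os.replace(tmp_link, link_path)  # rename replaces the old link atomically on POSIX


# Usage: two folders named after (made-up) commit hashes and a "current" link.
root = tempfile.mkdtemp()
old_dir = os.path.join(root, "abc123")
new_dir = os.path.join(root, "def456")
os.mkdir(old_dir)
os.mkdir(new_dir)
current = os.path.join(root, "current")

switch_current(current, old_dir)  # initial deployment
switch_current(current, new_dir)  # update; a rollback is the same call with old_dir
```

Because the main application only ever reads through `current`, it sees either the old content or the new content, never a half-pulled folder.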

The logging and warning backend service

We have learnt that with unattended, remote applications, when it comes to logging, more is more. Also, since we were not going to be able to SSH or VNC into the machines remotely, we needed to send those logs to a remote server (Ruby on Rails hosted by the nice Heroku guys).

The backend service not only allows us to go through the session logs of a particular machine, it is also a proactive system:

- It notifies a bunch of people by email if it receives a log entry over a threshold of WARNING.

- It also notifies if a machine doesn’t call home for more than 5 minutes (this might not be bad per se, but it is something worth investigating).
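The two rules can be sketched as follows. This is illustrative Python, not the Rails code; the level names and numbers are assumptions, and the sketch assumes that WARNING itself (and anything more severe) triggers an email.

```python
from datetime import datetime, timedelta

# Hypothetical severity scale; only the ordering matters here.
LEVELS = {"DEBUG": 10, "INFO": 20, "WARNING": 30, "ERROR": 40}


def should_email(level: str, threshold: str = "WARNING") -> bool:
    """True when a log entry is severe enough to notify people (assumed inclusive)."""
    return LEVELS[level] >= LEVELS[threshold]


def is_silent(
    last_seen: datetime,
    now: datetime,
    limit: timedelta = timedelta(minutes=5),
) -> bool:
    """True when a machine has not called home for longer than the limit."""
    return now - last_seen > limit
```

The second check has to run on a timer on the server side, since a machine that has gone quiet will not send anything to trigger it.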

And that’s about it! We are quite happy with the result. Too bad a few of the features didn’t make it to the final build. Maybe next year!

Let us know what you think about the whole setup, whether you have tried anything similar using Haxe and if not Haxe, what and why.

Last, we would like to thank the Haxe community, particularly the authors of hxcpp, hxOpenFrameworks, MSignal and XAPI (although that’s me!).

THANKS!